We've all had that "brilliant" idea at least once—a product, business, or startup that seems destined for success. That moment of inspiration, the confidence of intuition, and suddenly you're imagining the profits this project will bring.

But an important question arises: what if your intuition is wrong?

Relying solely on gut feeling is risky. Blind confidence in an idea can cost you not only financially but also something far more valuable—time. Even the brightest, most promising ideas can fail if they don't solve a real problem or address market needs.

So how do you know if your idea is worth pursuing further? How can you make sure your potential "next big thing" actually has a chance of success before spending months or even years on it?

According to leading business analysis platforms, one of the main reasons startups fail is a lack of demand for their product: many startups build products or services that don't meet the needs or expectations of the people they are intended for.

Put simply, many founders start with product development rather than understanding their audience. They base decisions on assumptions rather than facts, creating what they think people need instead of figuring out what people actually need.

Let's explore practical ways to test your idea—methods that might not guarantee 100% success but significantly reduce risks and help you make more informed decisions before launching a product.

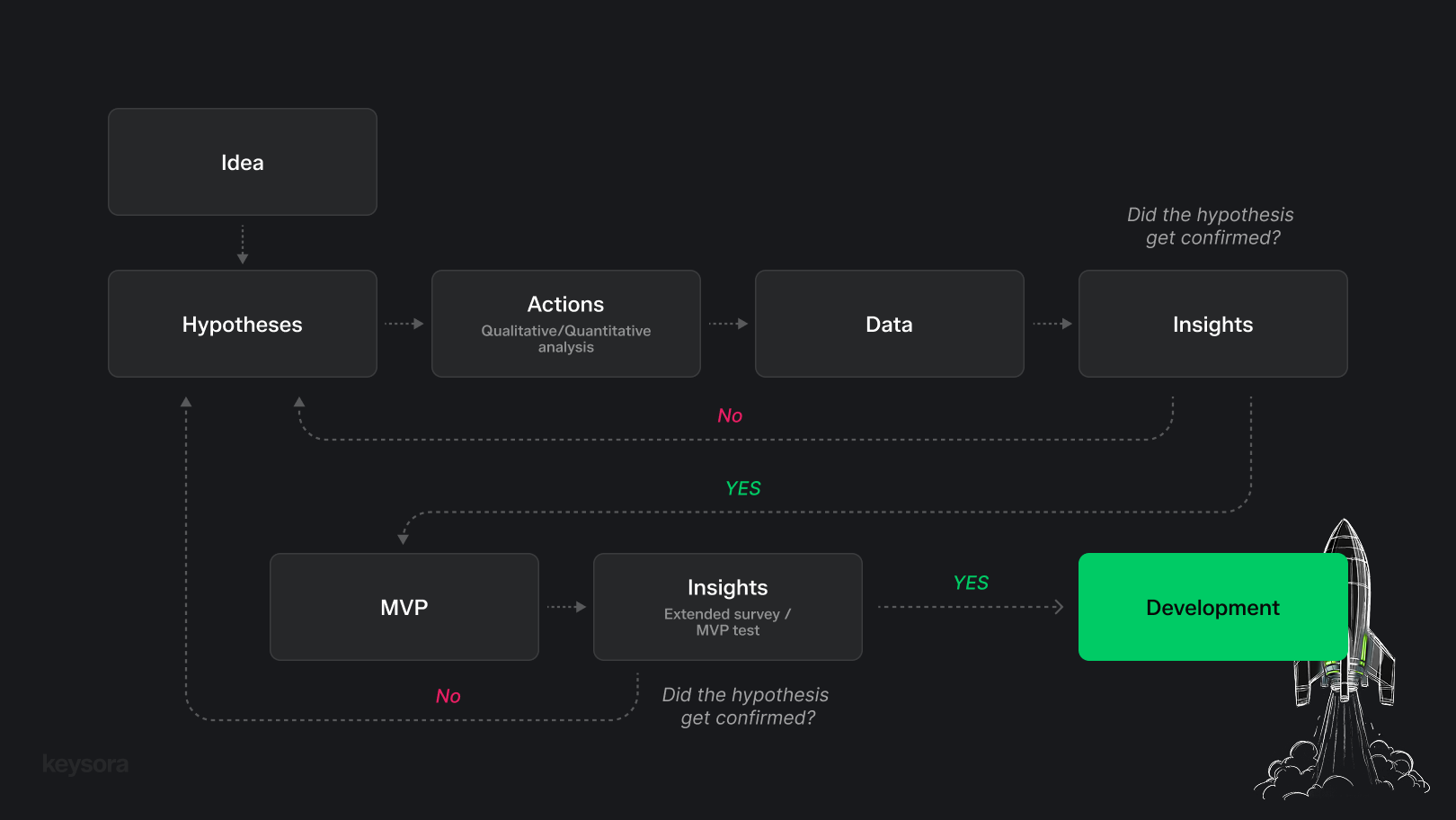

To make informed decisions, we need objective data. We can obtain this by systematically testing our hypotheses through qualitative and quantitative analysis. The process can be broken down into several steps:

- Formulating the idea/hypothesis — clearly describing what exactly we want to test.

- Actions — defining the target audience and testing the hypothesis.

- Data collection — gathering and analyzing qualitative and quantitative data.

- Conclusions — evaluating results and determining whether the hypothesis is confirmed or not.

How to formulate a hypothesis

Often, our "genius" ideas remain too general—lacking specificity, details, and clear direction. In practice, evaluating the viability of such an abstract idea becomes difficult. Any big idea can be broken down into small, understandable, and testable hypotheses.

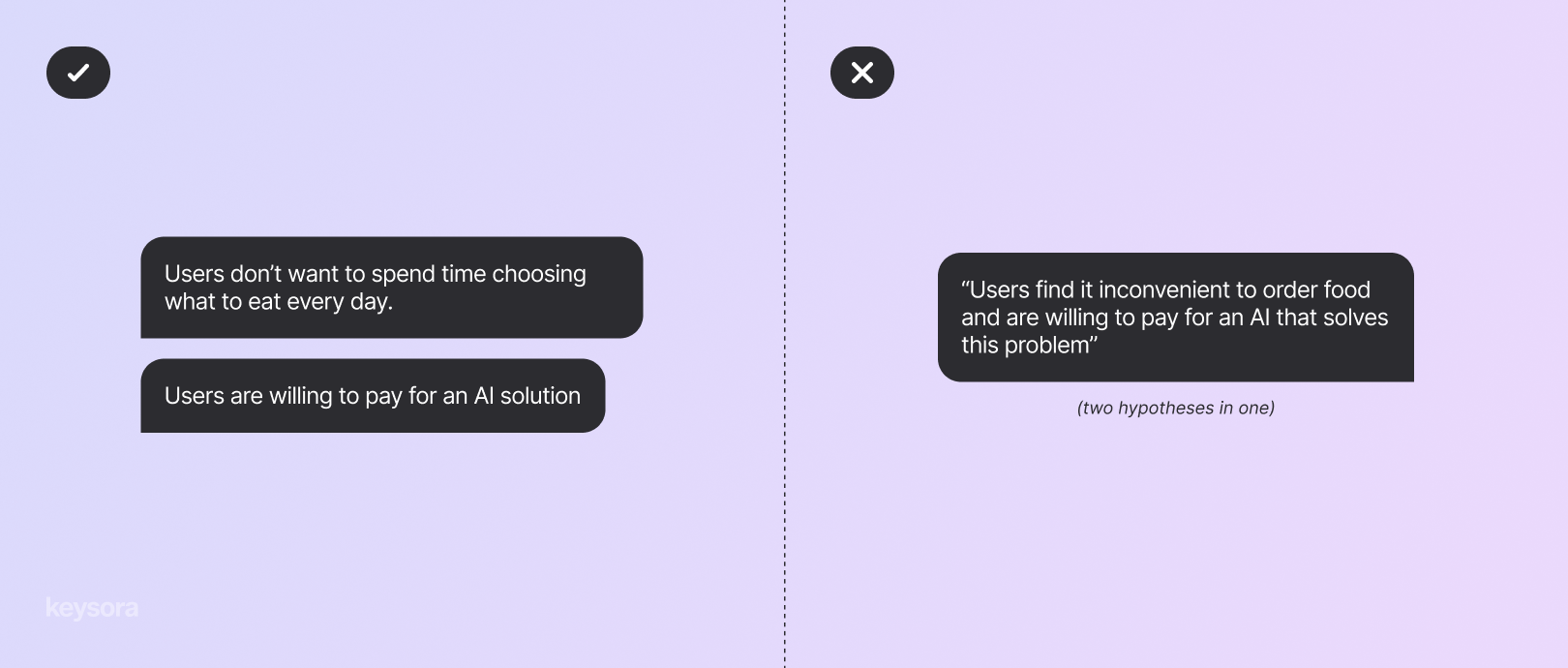

There's a simple rule you can use to formulate testable hypotheses:

One hypothesis should contain only one testable statement.

This helps avoid confusion, prevents mixing different thoughts together, and allows you to understand exactly what worked and what didn't. Here's an example:

Example of the initial idea:

"Creating a service that helps people get food through a subscription. The service uses artificial intelligence for automation and personalization. The system itself determines when and what to deliver, with high demand and revenue expected because people find it convenient to receive food without extra hassle, and many are willing to pay for regular delivery."

At first glance, this sounds good, but it's too broad a statement. In this formulation, it's impossible to understand who exactly these "people" are, what's being offered to them, and why they should want it.

A product hypothesis in working format usually consists of three parts:

- Who — specific audience

- What — action, feature, or solution

- Why/Expected result — what effect we want to achieve and what metric we'll use to measure success

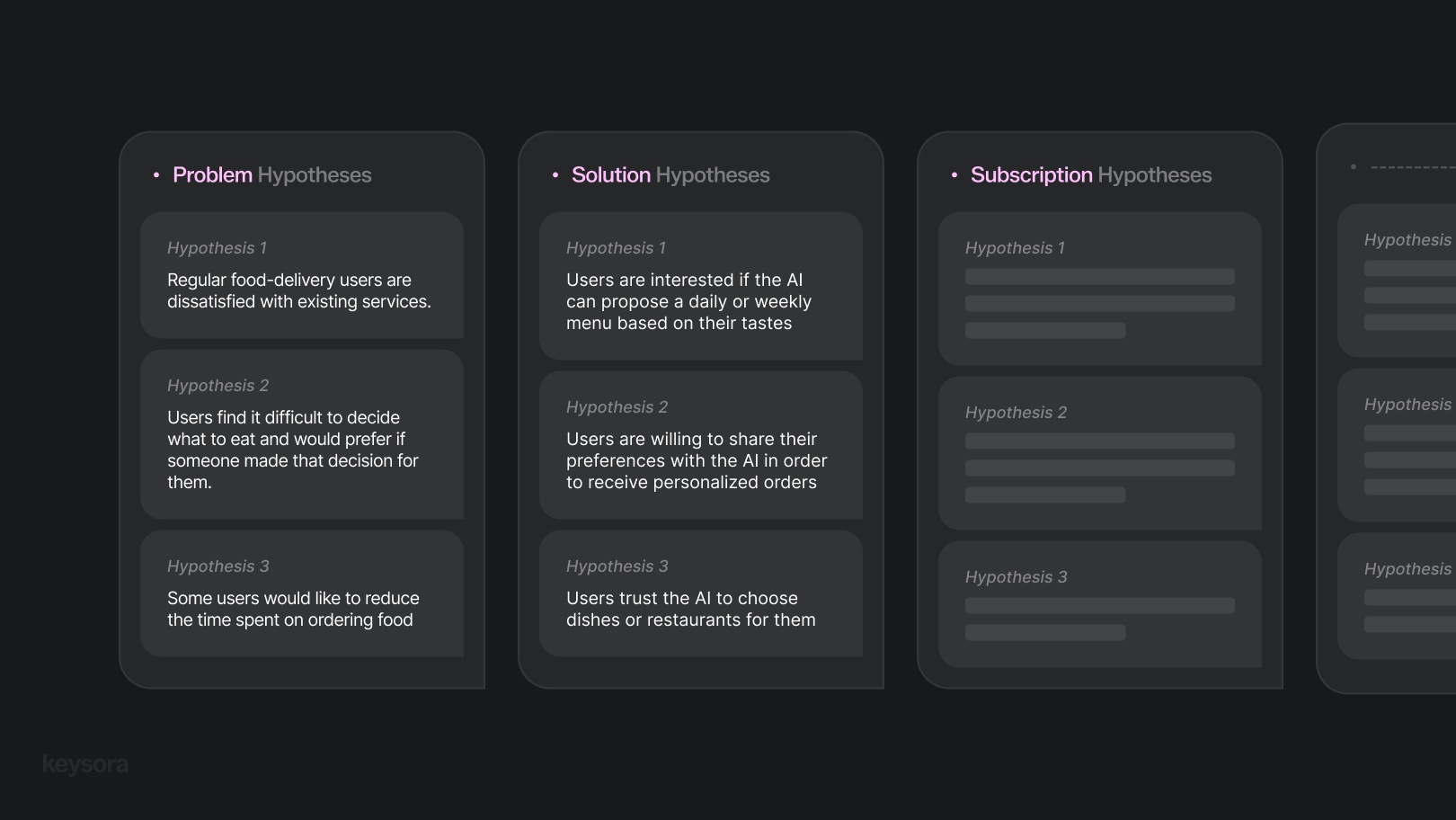

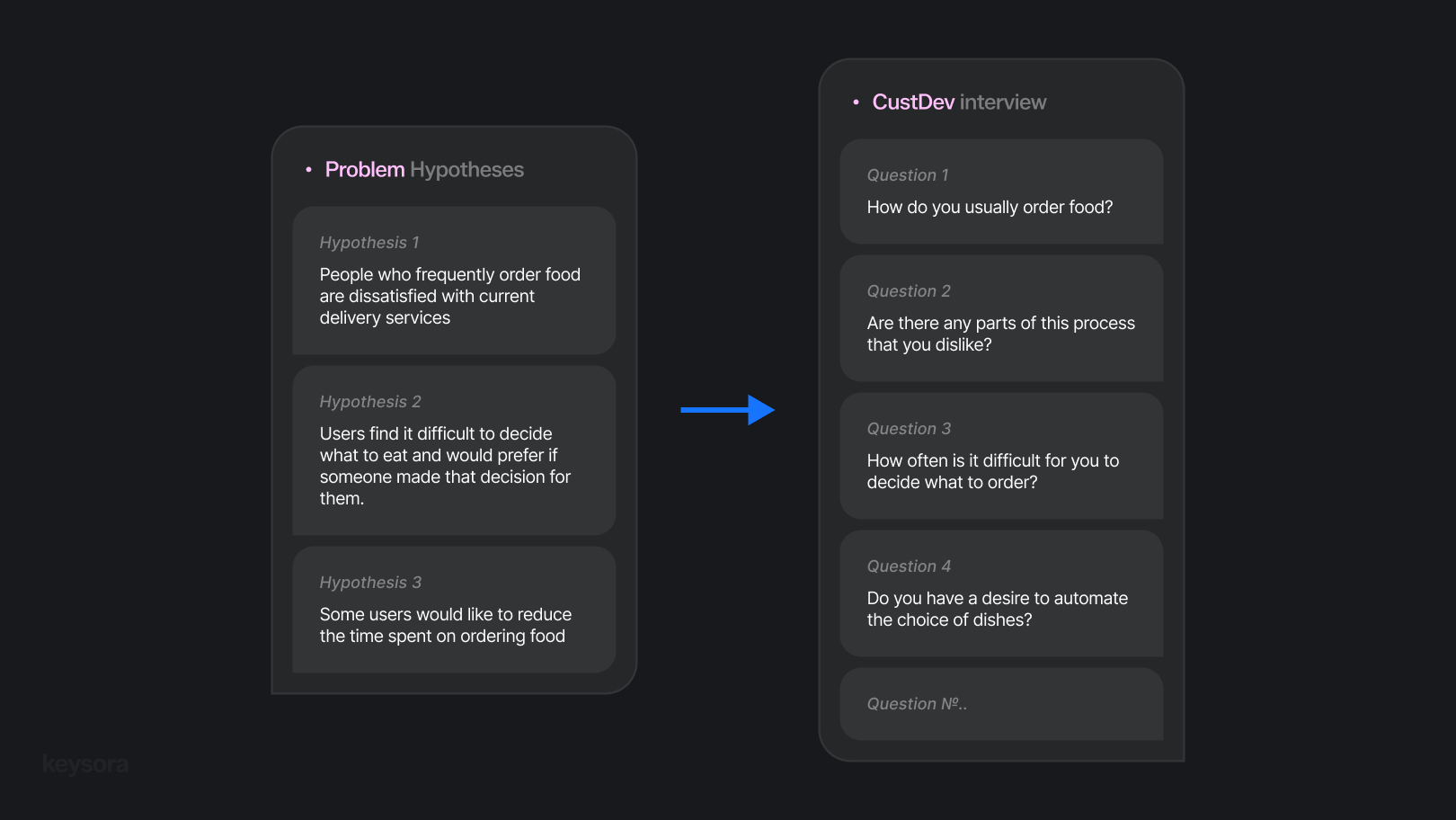

Therefore, a big idea needs to be broken down into specific, testable hypotheses. For example:

- "People who frequently order food are dissatisfied with current delivery services (e.g., inconvenient dish selection, long wait times, high fees, etc.)."

- "Users struggle to decide what to eat and would like someone to decide for them."

- "Some users would like to reduce the time spent ordering food (not opening different apps, not choosing each time)."

Each such hypothesis is narrow, understandable, and testable. And this is exactly how—step by step—we turn an abstract idea into a list of research hypotheses.
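The who/what/why format can also be written down as a small data structure, which makes it harder to skip one of the parts. The sketch below is only an illustration: the `Hypothesis` class, its field names, and the example values are assumptions, not part of any standard tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """One testable statement in the who / what / why format."""
    who: str     # specific audience
    what: str    # action, feature, or solution
    why: str     # expected result
    metric: str  # how success will be measured

    def statement(self) -> str:
        # Combine the parts into a single sentence that can be tested.
        return f"If {self.who} {self.what}, then {self.why} (measured by {self.metric})."


# Hypothetical example values, chosen only to illustrate the format.
h = Hypothesis(
    who="office workers who order delivery regularly",
    what="get an AI-suggested weekly menu",
    why="they will spend less time ordering",
    metric="average minutes spent per order",
)
print(h.statement())
```

If a field is hard to fill in, or needs an "and" to fit everything, that is usually a sign the hypothesis should be split further.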

How to define target audience

For a first pass at the target audience, ask who is most likely to encounter the problems described in the hypotheses. For a product that uses AI to select menus and order food via subscription, the natural candidates are people who frequently struggle with deciding what to eat and regularly use delivery services.

From such assumptions, we can identify several potential segments:

- Office workers — often order food and don't want to spend time choosing

- Young professionals living alone — value convenience and don't want to cook

- Students and youth — actively try new technologies and services, seek simple solutions

The next step is to check which of these segments actually experiences significant "pain": who orders food most often, who is dissatisfied with existing services, and who is open to new formats and the subscription idea.

Keep in mind that at this stage we're only assuming who our audience might be. A more precise understanding comes after hypothesis testing, or later through JTBD (Jobs to Be Done) deep-dive interviews, once the product has started interacting with real users.

JTBD helps uncover true behavioral motives—those that aren't always obvious on the surface. Data obtained from such interviews can then be translated into specific product solutions: functionality improvements, new features, positioning adjustments, etc.

We plan to cover how to conduct JTBD interviews and use the results in more detail in separate posts.

How to test hypotheses

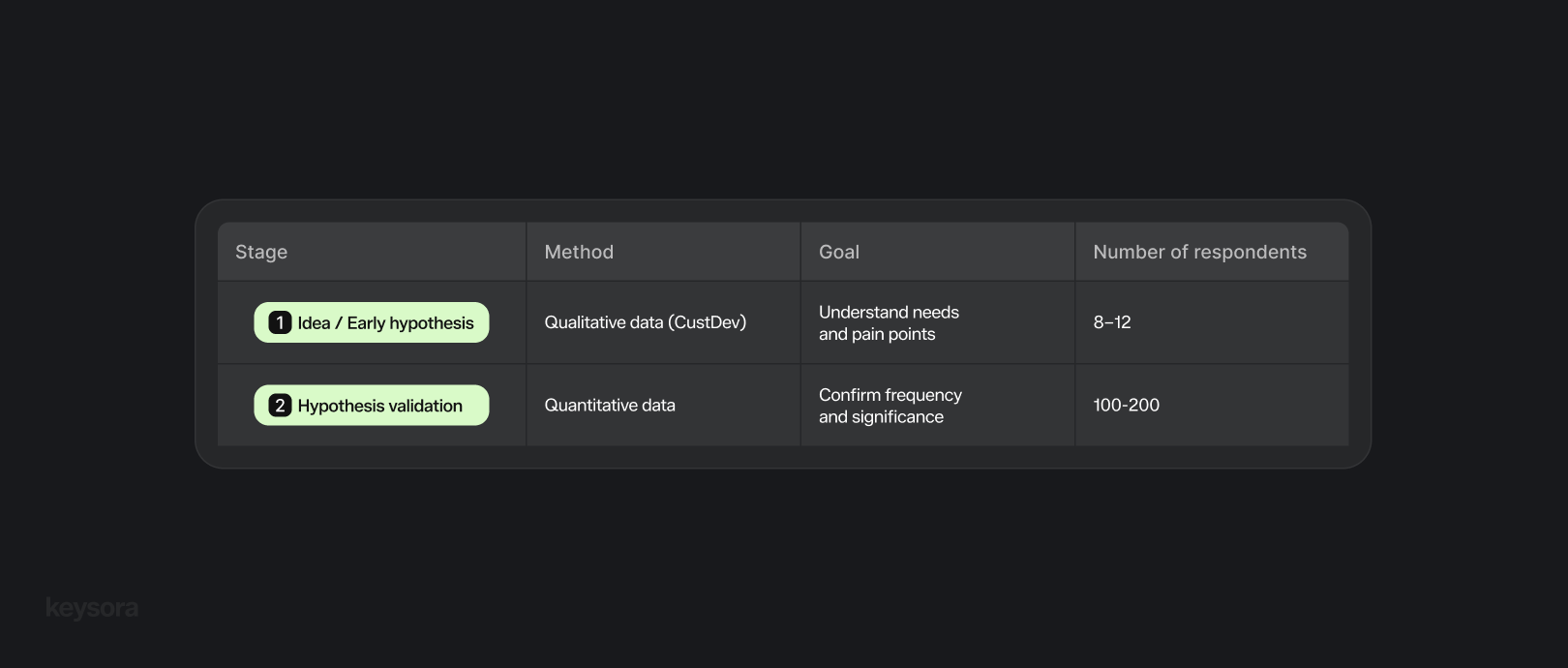

From a methodological perspective, research is typically divided into two basic types: qualitative and quantitative.

- Qualitative research studies why and how people act. This includes interviews, observations, and in-depth conversations.

- Quantitative research measures how much and how often something happens. This includes surveys, metrics, A/B tests, and other statistical methods.

In practice, qualitative methods are usually applied first to better understand user problems and motivations. After that, quantitative research is used to confirm and support the conclusions with numbers.

For qualitative analysis, we'll use Customer Development (CustDev). This approach helps understand customers: their problems, needs, and motives before and during product creation. Startups and product teams actively use CustDev to avoid creating products "blindly" and instead build what users actually need.

How to find respondents

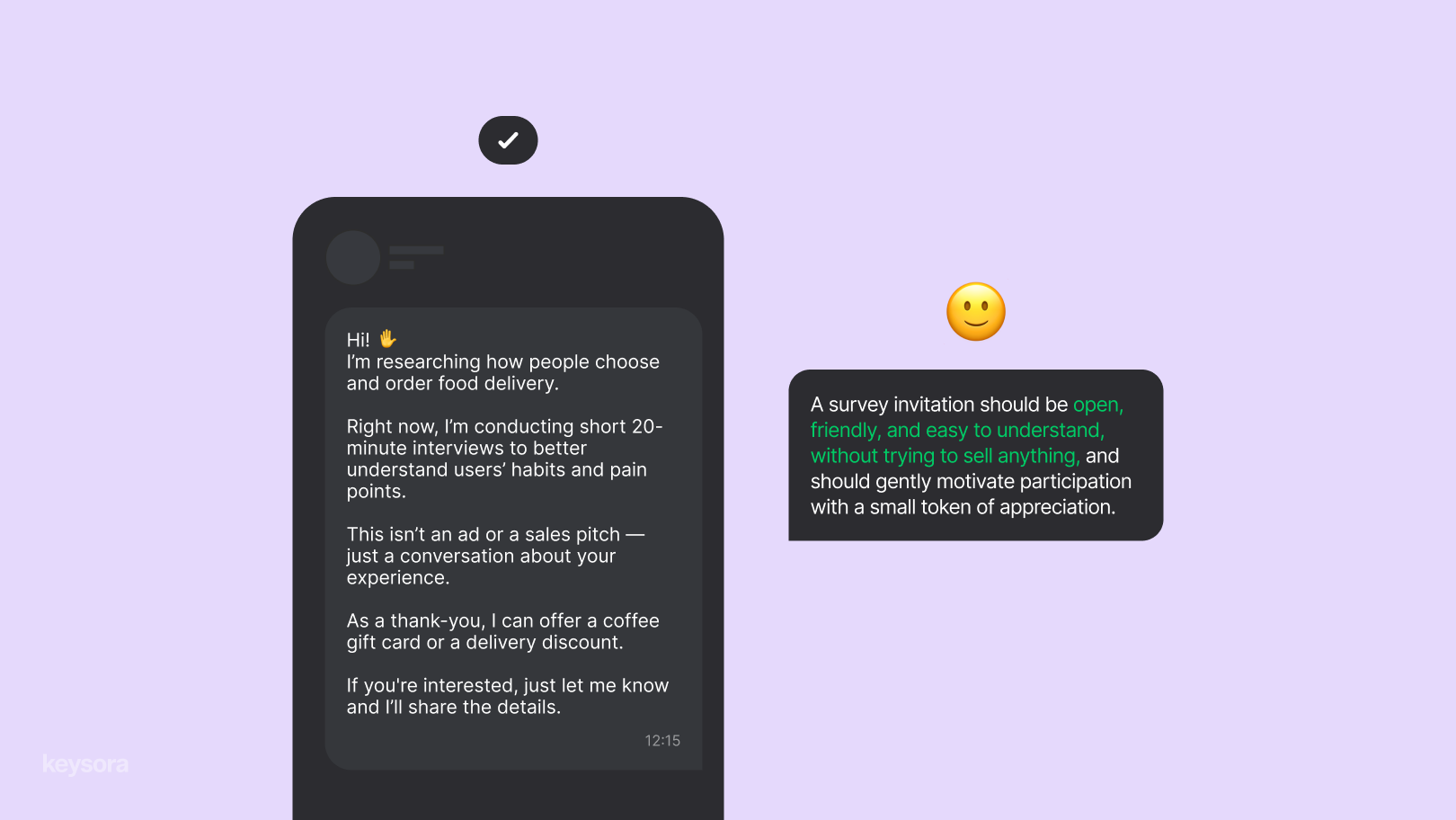

After formulating a list of hypotheses and defining the target audience, the next step is finding respondents. The main goal is to find real representatives of the target audience, not just "any people," and invite them for interviews.

Where to look:

- Personal network and social media — the first simple channel for hypothesis testing

- Online communities and chats — thematic channels, Reddit groups, Telegram, and other platforms

- Offline — approach people and ask a couple of questions about the hypotheses; invite interested ones for full interviews

- Specialized agencies and online platforms — help assemble focus groups

Most often, social media and thematic channels are used. As motivation for participants, you can offer a small bonus: a promo code, certificate, discount, or even a symbolic gift like coffee.

Number of respondents

For CustDev research, the depth and quality of interviews matter more than quantity. Typically, after 8-10 interviews, repeating patterns begin to emerge in responses, and additional meetings stop providing significant new information. When this happens, the interview stage can be considered complete.
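One way to make "additional meetings stop providing new information" concrete is to tag each interview with the themes it raised and count how many of them are new. This is a minimal sketch under stated assumptions: the theme labels are made up, and the stopping rule (three interviews in a row with no new themes) is an arbitrary choice, not an established standard.

```python
def new_themes_per_interview(interviews):
    """For each interview, count themes not heard in any earlier interview."""
    seen = set()
    counts = []
    for themes in interviews:
        fresh = set(themes) - seen
        counts.append(len(fresh))
        seen |= fresh
    return counts


def is_saturated(counts, window=3):
    """True if the last `window` interviews added no new themes (assumed rule)."""
    return len(counts) >= window and all(c == 0 for c in counts[-window:])


# Hypothetical theme tags, one set per interview, in chronological order.
interviews = [
    {"hard to choose", "high fees"},
    {"hard to choose", "long wait"},
    {"high fees"},
    {"long wait", "hard to choose"},
    {"high fees", "long wait"},
]

counts = new_themes_per_interview(interviews)
print(counts)                # [2, 1, 0, 0, 0]
print(is_saturated(counts))  # True
```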

CustDev principles

To formulate proper CustDev questions, it's important to follow several simple but critical principles.

First, questions should be neutral—without hints toward desired answers. The interviewee shouldn't feel like they're expected to give a "correct" response.

Second, rely only on facts from the past, not fantasies about the future. Instead of "Would you buy?" ask: "When was the last time you encountered this?" or "How did you solve it?"

Third, focus on behavior, not opinions. What matters are real actions, tools, and habits, not someone's musings about "how nice it would be."

Fourth, always ask for specific examples: recent situations, real cases. If there's no frequent or recent case, there's likely no real "pain."

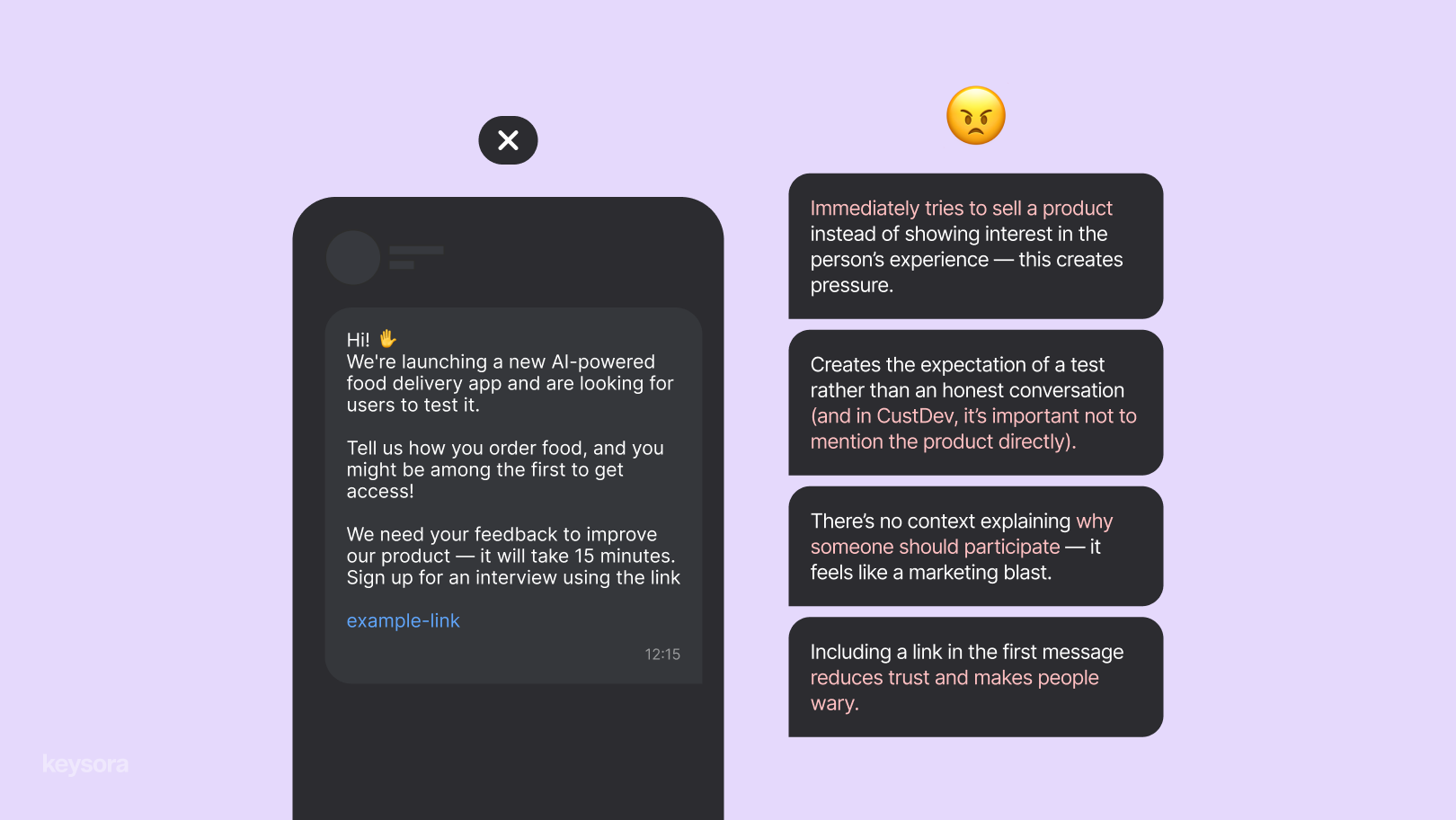

What not to do:

- Don't ask leading questions or inquire about the future—this yields false answers.

- Don't pitch your product during the interview—otherwise the person will start saying what you want to hear.

- Don't seek hypothesis confirmation or ask "Do you like the idea?"—this doesn't reveal real behavior.

- Don't turn the conversation into a questionnaire: CustDev is a live dialogue.

How to conduct CustDev

After finding respondents, schedule interviews. Any format works: an in-person meeting, a Zoom call, or a phone call.

Start the interview with 1-2 minutes of casual conversation to help the person relax. Focus on the respondent's actual experience, avoiding hypothetical questions and not influencing their opinion. Don't sell your idea or seek hypothesis confirmation—even if responses don't match your expectations.

The main task is to ask questions according to the interview structure, listen carefully, and record responses. Optimal duration: 30-40 minutes.

After a series of interviews, save all notes and data in a single document for further analysis and pattern identification.

How to conduct quantitative analysis

Quantitative analysis helps check how widespread insights are among a broader audience—in other words, how many people think similarly.

Based on CustDev data, create a short questionnaire of 5-10 questions. Questions should focus on behavior and motivation, not the product itself.

You can create the survey via Google Forms, Telegram, or other convenient tools, and distribute it through social media, thematic communities, and chats.

- Typically, 100-200 respondents are sufficient for basic validation.

- For convincing and statistically reliable results, 500+ respondents are needed.
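To get a feel for what these sample sizes buy you, it helps to look at the margin of error for a measured percentage. The sketch below uses the standard approximation for a proportion at roughly 95% confidence (z = 1.96) and the worst case p = 0.5. It assumes a simple random sample, which a survey shared through social media usually is not, so treat the numbers as a lower bound on the real uncertainty. The function name is illustrative.

```python
import math


def margin_of_error(n, p=0.5, z=1.96):
    """Approximate margin of error for a proportion p measured on n respondents."""
    return z * math.sqrt(p * (1 - p) / n)


for n in (100, 200, 500):
    print(f"n={n}: +/- {margin_of_error(n) * 100:.1f} percentage points")

# n=100: +/- 9.8 percentage points
# n=200: +/- 6.9 percentage points
# n=500: +/- 4.4 percentage points
```

In other words, with 200 respondents a result of 65% is compatible with a true share of roughly 58% to 72%, while 500 respondents narrow that to roughly 61% to 69%.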

Example:

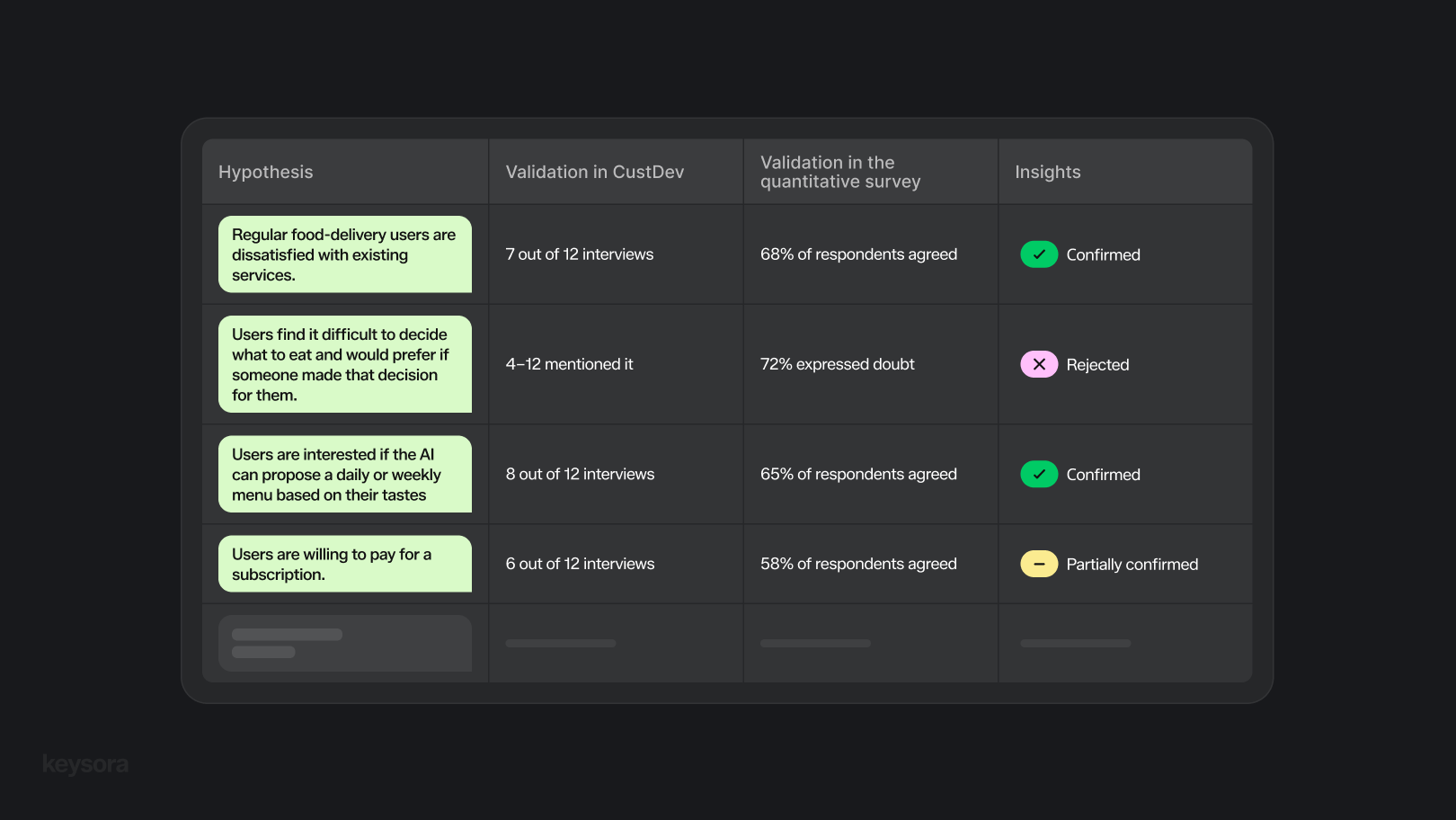

After 12 CustDev interviews, it turned out that about 66% of respondents were interested in having AI suggest daily or weekly menus based on their tastes. The next step is to run a short survey (5-10 questions) with about 200 people to check whether a similar share of the wider audience actually feels the same way.

Data analysis and conclusions

After conducting CustDev interviews and quantitative surveys, the task is to combine these two information sources and draw final conclusions about the hypotheses.

Analyzing CustDev interviews

- Review notes and recordings.

- Identify repeating problems, motives, and phrases appearing across multiple respondents.

- Record not only direct answers but also indirect signals: emotions, inconveniences, problem-solving methods.

- Group repeating observations by themes: for example, "difficulty choosing food," "inconvenience of services," "interest in AI recommendations."
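If the notes are tagged with themes, the grouping step can be as simple as counting how many respondents mentioned each theme. The sketch below assumes a hypothetical `notes` dictionary with made-up respondents; the theme names are taken from the examples above.

```python
from collections import Counter

# Hypothetical tagged notes: respondent -> themes mentioned in the interview.
notes = {
    "respondent_1": ["difficulty choosing food", "inconvenience of services"],
    "respondent_2": ["difficulty choosing food"],
    "respondent_3": ["interest in AI recommendations", "difficulty choosing food"],
    "respondent_4": ["inconvenience of services"],
}

# Count each theme once per respondent, even if it came up several times.
theme_counts = Counter(
    theme for themes in notes.values() for theme in set(themes)
)

for theme, count in theme_counts.most_common():
    print(f"{theme}: {count} of {len(notes)} respondents")
```

Counting per respondent rather than per mention keeps one talkative interviewee from inflating a theme.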

Checking quantitative data

- Check what percentage of the audience encounters each problem or shows interest in the solution.

- CustDev shows depth and motives; quantitative analysis shows frequency and scale.

Comparing the two sources

Compare themes from CustDev with their frequency in the survey.

Example: In CustDev, 7 out of 12 people (about 58%) complained about difficulty choosing dishes, while in the survey of 200 people, 65% confirmed this. The two sources roughly agree, so the problem is confirmed and significant. A large discrepancy between them would signal the need for additional validation or hypothesis refinement.
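A rough way to judge whether two such numbers agree is to compare their confidence intervals. The sketch below uses the Wilson score interval at roughly 95% confidence for the figures in the example (7 of 12 interviewees, and 65% of 200 survey respondents, which is 130 people). Treating either group as a random sample is a strong assumption, and overlapping intervals are a heuristic rather than a formal test; the helper names are illustrative.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95% confidence)."""
    p_hat = successes / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


def intervals_overlap(a, b):
    """True if two (low, high) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


interviews = wilson_interval(7, 12)   # 7 of 12 interviewees
survey = wilson_interval(130, 200)    # 65% of 200 survey respondents

print(f"interviews: {interviews[0]:.0%} to {interviews[1]:.0%}")
print(f"survey:     {survey[0]:.0%} to {survey[1]:.0%}")
print("consistent" if intervals_overlap(interviews, survey) else "discrepancy: investigate")
```

The interview interval is very wide because 12 people is a small sample, which is exactly why the survey is needed to pin the number down.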

Formulating conclusions

- Note which hypotheses were confirmed, which weren't, and which require additional research.

- If the data is contradictory, plan additional steps: new interviews, clarifying surveys, or MVP testing.

What to do if the hypothesis is not confirmed

If after data analysis the hypothesis isn't confirmed, it's not a failure—it's valuable information. It shows that your assumptions don't match real user needs and allows you to adjust strategy before resources are spent on product development.

What to do next:

- Re-examine the hypothesis — maybe it was formulated too broadly or incorrectly. Break it down into narrower, more specific hypotheses.

- Look deeper at the data — sometimes "non-confirmation" hides new insights: motives, inconveniences, alternative needs.

- Adjust the product or offer — use the obtained data to adapt features, format, or target audience.

- Test a new hypothesis — repeat CustDev or quantitative surveys to clarify and confirm new ideas.

The main thing is to view every negative result as an opportunity to better understand your audience and reduce risks before product launch.

What to do if the hypothesis is confirmed

If the hypothesis is confirmed, it means your idea actually solves a problem for the target audience. This isn't a reason to immediately scale the product, but rather a signal that you can proceed further along the validated path.

What to do next:

- Solidify insights — document exactly what data confirmed the hypothesis: who the target audience is, what problems are being solved, and how users respond to your solution.

- Develop and improve the product — use confirmed hypotheses to create functionality, interface, or service.

- Prioritize next hypotheses — move to the next important hypothesis to gradually reduce risks and test key product elements.

- Test scalability — after confirmation, you can test the product with a broader audience or increase reach.

Hypothesis confirmation is real evidence that you're moving in the right direction and creating a product users actually want to use.

How long does hypothesis validation usually take?

The number of hypotheses to test and the scale of research depend on the specific idea or startup. The more complex the product and the broader the target audience, the more hypotheses need testing and the more extensive the research.

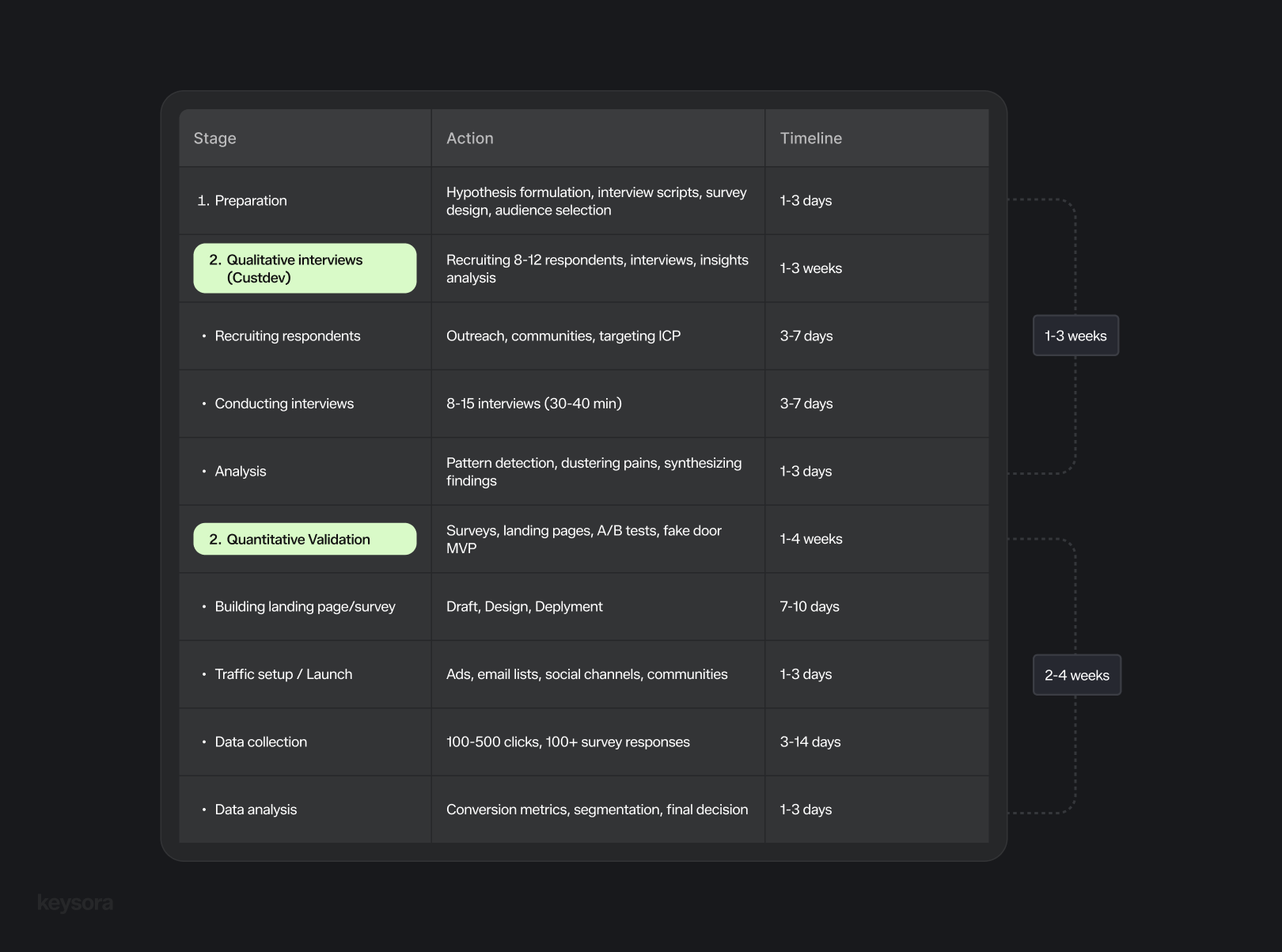

How fast you can move depends largely on how quickly you can find respondents and conduct interviews. The image above shows average timeframes for each stage.

Key points

1. Start with an idea, turn it into a hypothesis

- Break your idea into specific, testable assumptions.

- Identify who (your target audience), what (the action/solution), and why (the expected outcome).

2. Define your target audience

- Identify who might face the problem you're solving.

- Validate your assumptions through interviews and surveys.

3. Conduct qualitative research (CustDev)

- Do 30-40 minute interviews focused on real experiences (avoid hypotheticals).

- Record all notes and data for later analysis.

- 8-10 interviews are typically enough to find patterns.

4. Conduct quantitative analysis

- Create surveys based on your CustDev insights.

- Ask about behaviors and motivations, not directly about the product.

- Test how frequently the problem occurs with a larger group (100-200 for basic validation, 500+ for statistical reliability).

5. Analyze and draw conclusions

- Qualitative data: identify key problems and motivations, group by themes.

- Quantitative data: measure frequency and scale of problems.

- Compare both data types and note confirmed vs. unverified hypotheses.

6. Take action based on validation results

- If validated: Solidify insights, continue product development, test new hypotheses, explore scalability.

- If not validated: Revisit the hypothesis, analyze data deeper, adjust the product, test new ideas.